AI is making people faster.

The question is whether it is also making them less critical.

If Shakespeare existed today, he would probably rewrite one of his most famous lines like this:

“To use AI, or not to use it? That is the question.”

Because we are living through a strange moment in history: AI is making people dramatically more productive while simultaneously creating new risks around dependency, loss of critical thinking, and intellectual passivity.

Studies from Harvard University, Massachusetts Institute of Technology, Microsoft Research, and others are beginning to reveal a more complex reality. On one hand, AI clearly improves efficiency and accelerates operational work. On the other, researchers are already warning about the “passive copilot” effect: the tendency to stop questioning answers simply because a machine delivered them confidently.

So the question is no longer whether we should use AI.

The real question is: how do we use AI without outsourcing our judgment to it?

I want to share two frameworks that I found especially useful for answering that question.

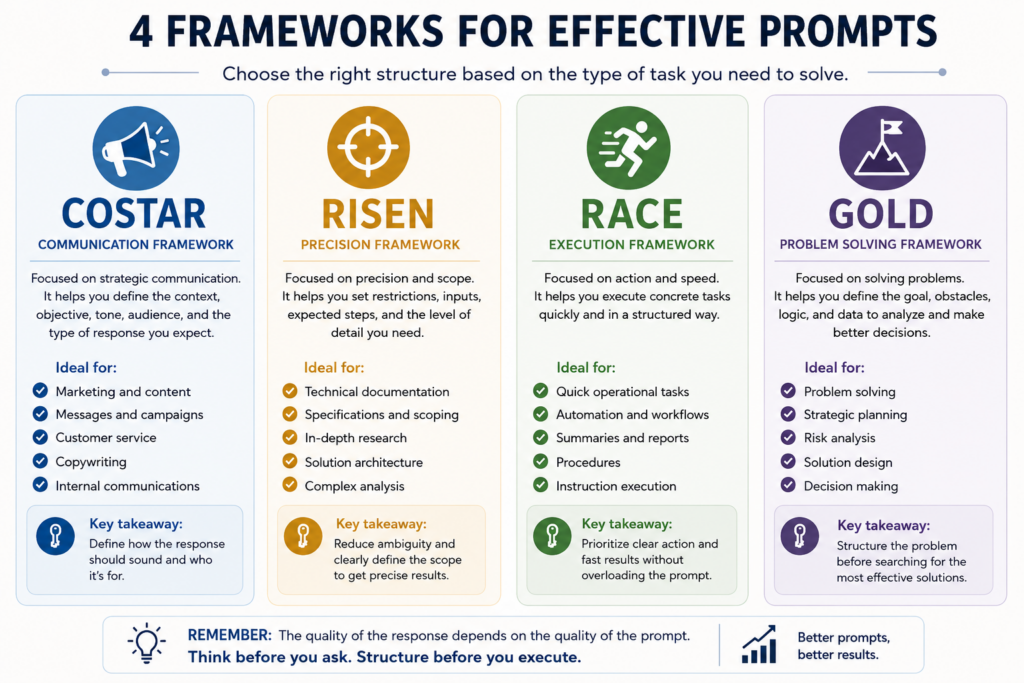

The first one focuses on something fundamental: learning how to structure our instructions more effectively. Because AI does not replace thinking; it amplifies it. The quality of the response will depend directly on the clarity, depth, and precision of what we provide.

If the context is poor, ambiguous, or superficial, the AI will attempt to fill in the gaps on its own. And that is where many problems begin: convincing answers built on weak assumptions or incomplete information.

That is why, in this first section, we will explore four extremely useful structures depending on the kind of task you need to solve: communication, rapid execution, problem-solving, or deep technical analysis.

Framework 1: Structure the prompt better.

COSTAR — Strategic communication and persuasive content

COSTAR works especially well when the main goal is controlling tone, narrative, and communication quality. This framework helps structure prompts focused on marketing, branding, sales, educational content, and customer support.

Its strength lies in forcing you to define not only what you want, but also how it should sound, who it is for, and what kind of response you expect.

When to use it

- Marketing campaigns

- LinkedIn posts

- Commercial emails

- Customer support

- Copywriting

- Educational content

Example prompt

#Context:

You are part of a B2B SaaS company specialized in automation.

#Objective:

Write a LinkedIn post about critical thinking and AI.

#Style:

Professional but conversational.

#Tone:

Reflective and slightly provocative.

#Audience:

Solutions architects and technology leaders.

#Response:

Include a strong introduction, a practical example, and a memorable closing.
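If you build prompts like this often, it can help to assemble them in code so that no section is silently left out. The following is a minimal sketch, not an official COSTAR implementation: the `CostarPrompt` class and its `render` method are hypothetical names I am introducing here, and the section labels simply mirror the example above.

```python
from dataclasses import dataclass, fields


@dataclass
class CostarPrompt:
    """Hypothetical container for the six COSTAR sections."""
    context: str
    objective: str
    style: str
    tone: str
    audience: str
    response: str

    def render(self) -> str:
        """Join the sections into one prompt, refusing empty sections."""
        sections = []
        for f in fields(self):
            value = getattr(self, f.name).strip()
            if not value:
                # An empty section is a gap the model would fill on its own.
                raise ValueError(f"COSTAR section '{f.name}' is empty")
            sections.append(f"#{f.name.capitalize()}:\n{value}")
        return "\n\n".join(sections)


prompt = CostarPrompt(
    context="You are part of a B2B SaaS company specialized in automation.",
    objective="Write a LinkedIn post about critical thinking and AI.",
    style="Professional but conversational.",
    tone="Reflective and slightly provocative.",
    audience="Solutions architects and technology leaders.",
    response="Include a strong introduction, a practical example, and a memorable closing.",
)
print(prompt.render())
```

The only design choice worth noting is the `ValueError`: failing loudly on a blank section is one way to enforce the discipline COSTAR is meant to create.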

RISEN — Constraints, precision, and technical scope

RISEN is useful for tasks where precision matters more than creativity. The focus is on limiting ambiguity and reducing open-ended model interpretations.

It is ideal for technical documentation, deep research, solution architecture, specification generation, or complex tasks where the context must be carefully constrained.

When to use it

- Technical documentation

- Scoping

- Deep research

- Software architecture

- Functional requirements

- Complex analysis

Example prompt

Context:

We need to design a bidirectional contact synchronization between HubSpot and Salesforce.

Instructions:

Identify the main technical and operational risks.

Define the recommended integration architecture.

Explain the key dependencies, including data model, API, authentication, ownership, lifecycle, and deduplication considerations.

Suggest suitable middleware options.

Expected output:

The response should be highly technical, structured, and implementation-oriented.

Constraints:

Do not include any options that require Operations Hub Enterprise.
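A constraint in a prompt is a request, not a guarantee, so it is worth checking the answer against it. Here is a rough sketch of such a check, assuming a plain case-insensitive substring match is good enough; the function name `find_constraint_violations` and the sample draft are made up for illustration.

```python
def find_constraint_violations(response: str, banned_terms: list[str]) -> list[str]:
    """Return the banned terms that appear in the response (case-insensitive)."""
    lowered = response.lower()
    return [term for term in banned_terms if term.lower() in lowered]


banned = ["Operations Hub Enterprise"]
# Invented model output, used only to exercise the check.
draft = "Option A: use a custom middleware. Option B: use Operations Hub Enterprise workflows."
print(find_constraint_violations(draft, banned))  # ['Operations Hub Enterprise']
```

A substring match will miss paraphrases, so treat an empty result as "nothing obvious found", not as proof that the constraint was respected.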

RACE — Fast execution and operational tasks

RACE is designed for speed and operational clarity. It works best when you need AI to execute concrete tasks without excessive conceptual exploration.

It is extremely useful for daily automation, rapid generation of structured content, and repetitive workflows.

When to use it

- Quick tasks

- Operational automation

- Summaries

- Procedures

- Text transformation

- Procedural execution

Example prompt

Role:

Act as a technical project manager.

Action:

Summarize this meeting into actionable bullet points.

Context:

The meeting was about a Salesforce-to-HubSpot migration.

Execute:

Generate:

- decisions made

- risks

- next steps

- owners

GOLD — Problem-solving and strategic thinking

GOLD is one of the most useful frameworks for complex problems because it forces you to lay out the goal, the obstacles, the reasoning criteria, and the data before the model generates an answer.

It is especially valuable for strategic planning, risk analysis, troubleshooting, and decision-making where multiple variables and obstacles exist.

When to use it

- Problem-solving

- Strategic planning

- Risk analysis

- Troubleshooting

- Solution design

- Decision-making

Example prompt

Goal:

Reduce duplicates between HubSpot and Salesforce.

Obstacles:

The systems have inconsistent IDs and multiple sources of truth.

Logic:

Analyze options considering scalability and maintainability.

Data:

- 500k contacts

- bidirectional synchronization

- multiple pipelines

- existing middleware integration

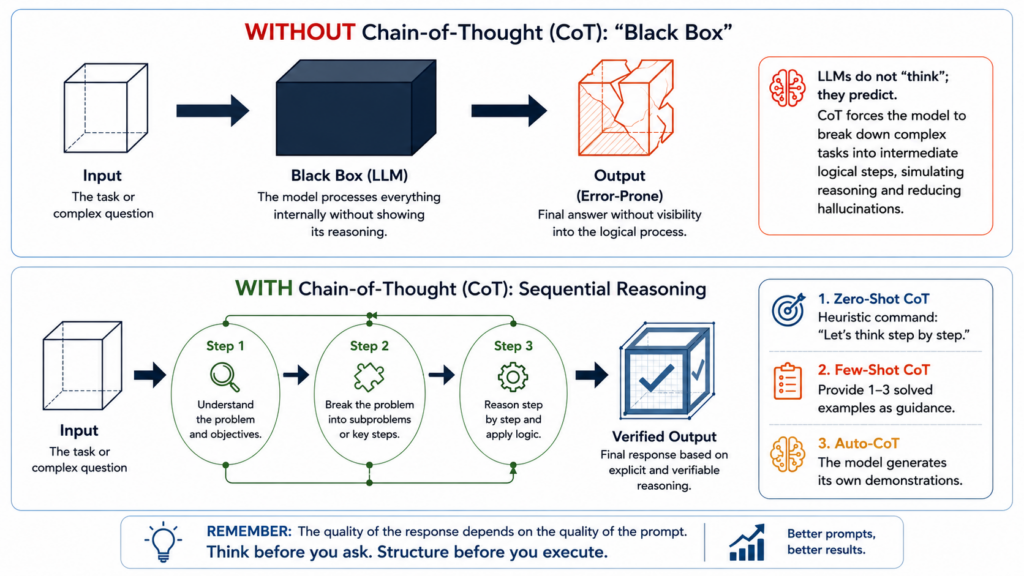

Framework 2: Chain of Thought (break the task down before answering)

Now, what happens when we have a task that is simply too large and we need help solving it?

This is where a very common problem appears: we write one massive prompt, receive one massive response… and then have no clear way to evaluate it. There is too much content, too many assumptions, and too many decisions mixed into a single answer.

That is where Chain of Thought comes in.

First, we need to clarify something important: LLMs do not “think” like people do. They generate a response by repeatedly predicting the most probable next token, based on patterns learned in training, the context, and the instructions.

So when we give them a complex task and simply ask for “the final answer,” we are trusting a prediction without seeing how it was constructed.

With Chain of Thought, we are not trying to make AI “think” in a human sense. What we are doing is asking it to decompose a complex task into logical, visible, and reviewable steps. This helps reduce errors, identify weak assumptions, and avoid answers that sound convincing but fail under careful review.

The difference is enormous.

Simple example

Instead of asking:

Create a HubSpot implementation strategy for this client.

Ask:

Create a HubSpot implementation strategy for this client. Before giving me the final strategy, break the problem into steps:

- Identify the client’s goals.

- List the systems involved.

- Detect dependencies and risks.

- Define assumptions.

- Propose a phased strategy.

- Explain which areas require validation before moving forward.

After that, generate the final recommendation.
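The same decomposition can be generated from a list of steps, which makes it easy to reuse across tasks. This is a small sketch under my own assumptions: `build_stepwise_prompt` is a hypothetical helper, and the wording of the wrapper sentences is adapted from the prompt above.

```python
def build_stepwise_prompt(task: str, steps: list[str]) -> str:
    """Wrap a task in an explicit, numbered list of intermediate steps."""
    if not steps:
        raise ValueError("at least one intermediate step is required")
    numbered = "\n".join(f"{i}. {step}" for i, step in enumerate(steps, start=1))
    return (
        f"{task}\n\n"
        "Before giving me the final answer, break the problem into steps:\n"
        f"{numbered}\n\n"
        "After that, generate the final recommendation."
    )


steps = [
    "Identify the client's goals.",
    "List the systems involved.",
    "Detect dependencies and risks.",
    "Define assumptions.",
    "Propose a phased strategy.",
    "Explain which areas require validation before moving forward.",
]
print(build_stepwise_prompt("Create a HubSpot implementation strategy for this client.", steps))
```

Numbering the steps is deliberate: it gives you something concrete to point at when one part of the answer looks weak.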

The second prompt usually produces a better answer, and it also lets us audit how the model arrived at it.

Evaluation example

We can also use Chain of Thought to review an AI-generated response:

Evaluate this response before improving it:

- What assumptions is it making?

- Which parts are weakly supported?

- What information is missing?

- Where could it be hallucinating?

- What should be validated with an external source?

Then generate a corrected version.
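One way to put this review into practice is to run it as two separate passes, critique first and revision second, so the critique can be read by a person before anything is rewritten. The sketch below assumes a generic `ask_model` callable that takes a prompt and returns text; it is a placeholder, not any specific vendor's API, and `review_and_revise` and `fake_model` are hypothetical names.

```python
from typing import Callable

REVIEW_QUESTIONS = [
    "What assumptions is it making?",
    "Which parts are weakly supported?",
    "What information is missing?",
    "Where could it be hallucinating?",
    "What should be validated with an external source?",
]


def review_and_revise(draft: str, ask_model: Callable[[str], str]) -> tuple[str, str]:
    """Run a critique pass, then a revision pass; return (critique, revision)."""
    questions = "\n".join(f"- {q}" for q in REVIEW_QUESTIONS)
    critique = ask_model(
        f"Evaluate this response before improving it:\n{questions}\n\nResponse:\n{draft}"
    )
    revision = ask_model(
        "Generate a corrected version of the response, addressing the critique.\n\n"
        f"Response:\n{draft}\n\nCritique:\n{critique}"
    )
    return critique, revision


# Stand-in for a real model call so the sketch runs without any API.
def fake_model(prompt: str) -> str:
    return f"[model output for a {len(prompt)}-character prompt]"


critique, revision = review_and_revise("HubSpot can sync everything natively.", fake_model)
print(critique)
print(revision)
```

Returning the critique alongside the revision keeps the intermediate judgment visible, which is the whole point of the exercise.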

This approach changes the relationship with AI.

We are no longer saying: “Give me an answer.”

We are saying: “Help me build, review, and improve an answer using judgment.”

And that is the difference between using AI as autopilot and using it as a real thinking tool.

If you made it this far: 10/10. I hope this helps you as much as it helped me.